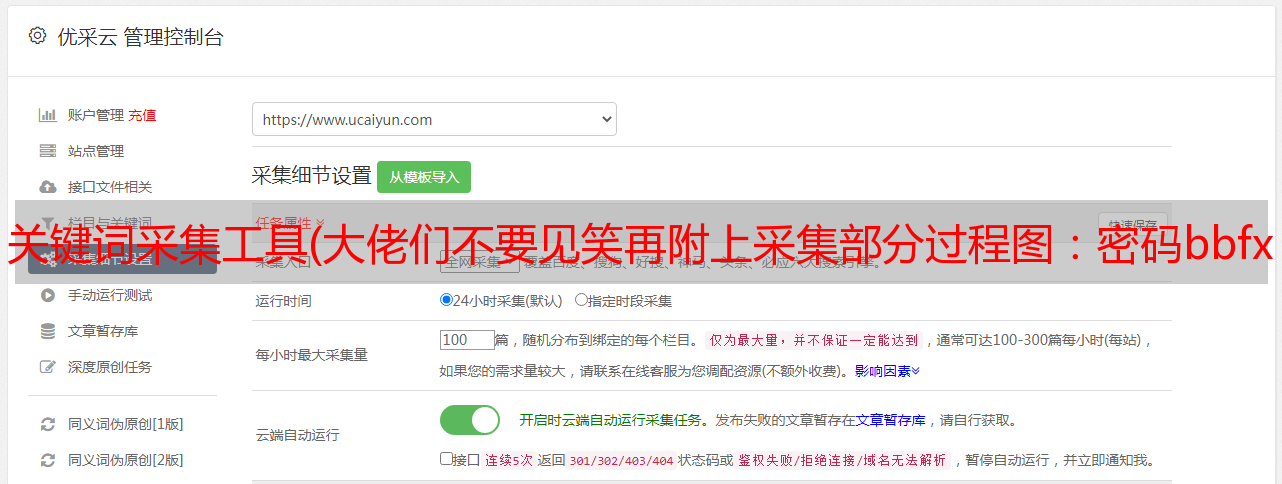

Keyword collection tool (experts, please don't laugh; screenshots of part of the collection process are attached; password: bbfxbbfx)

优采云 · Published: 2022-03-20 01:18

First, here is the code. Please don't laugh, experts.

```python
# Chinaz (站长工具) long-tail keyword miner
# -*- coding: utf-8 -*-
import re
import time
from urllib.parse import quote

import requests
import xlwt
from lxml import etree

# Replace the Cookie value with the one your own browser sends to chinaz.com.
headers = {
    'Cookie': 'UM_distinctid=17153613a4caa7-0273e441439357-36664c08-1fa400-17153613a4d1002; CNZZDATA433095=cnzz_eid%3D1292662866-1594363276-%26ntime%3D1594363276; CNZZDATA5082706=cnzz_eid%3D1054283919-1594364025-%26ntime%3D1594364025; qHistory=aHR0cDovL3N0b29sLmNoaW5hei5jb20vYmFpZHUvd29yZHMuYXNweCvnmb7luqblhbPplK7or43mjJbmjph8aHR0cDovL3Rvb2wuY2hpbmF6LmNvbS90b29scy91cmxlbmNvZGUuYXNweF9VcmxFbmNvZGXnvJbnoIEv6Kej56CB',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.108 Safari/537.36',
    # The original value wrongly repeated the "Accept:" prefix inside the header value.
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3',
    'Connection': 'keep-alive',
    # "br" dropped: requests only decodes Brotli responses when the brotli package is installed.
    'Accept-Encoding': 'gzip, deflate',
    'Accept-Language': 'zh-CN,zh;q=0.9',
}

SEARCH_URL = "http://stool.chinaz.com/baidu/words.aspx"
# Text the site shows when nothing is found ("Sorry, no related data found.");
# it must stay in Chinese because it is compared against the page content.
NOT_FOUND = "抱歉,未找到相关的数据。"


# Check whether the site has any long-tail keywords for this keyword
def search_keyword(keyword):
    html = requests.post(SEARCH_URL, data={'kw': keyword}, headers=headers).text
    con = etree.HTML(html)
    key_result = con.xpath('//*[@id="pagedivid"]/li/div/text()')
    # The original returned None (falsy) whenever the first text node was anything
    # other than the "not found" message, so check explicitly instead of relying on IndexError.
    if key_result and key_result[0].strip() == NOT_FOUND:
        print('\nNo long-tail keywords found; please try another keyword.')
        return False
    return True


# Get the number of result pages for the keyword
def get_page_number(keyword):
    html = requests.post(SEARCH_URL, data={'kw': keyword}, headers=headers).text
    con = etree.HTML(html)
    page_num = con.xpath('//*[@id="pagedivid"]/div/ul/li[7]/text()')
    # The pager text looks like "共 N 页" ("N pages in total")
    match = re.search(r'共(.+?)页', page_num[0], re.S) if page_num else None
    if match is None:
        raise RuntimeError('Page count not found; the page layout may have changed.')
    print('Starting to mine long-tail keywords for ' + keyword)
    # For a quick test, return 1 here instead of the real page count.
    return int(match.group(1).strip())


# Collect the keyword data page by page
def get_keyword_datas(keyword, page_number):
    datas_list = []
    for i in range(1, page_number + 1):
        print(f'Collecting page {i} of keyword data...\n')
        url = "https://data.chinaz.com/keyword/allindex/{0}/{1}".format(quote(keyword, safe=''), i)
        html = requests.get(url, headers=headers).text  # headers are now sent on GET too
        con = etree.HTML(html)
        key_words = con.xpath('//*[@id="pagedivid"]/ul/li[2]/a/text()')        # keyword
        overall_indexs = con.xpath('//*[@id="pagedivid"]/ul/li[3]/a/text()')   # overall (all-network) index
        chagnwei_indexs = con.xpath('//*[@id="pagedivid"]/ul/li[4]/a/text()')  # number of long-tail words
        jingjias = con.xpath('//*[@id="pagedivid"]/ul/li[5]/a/text()')         # number of paid bids
        collections = con.xpath('//*[@id="pagedivid"]/ul/li[9]/text()')        # indexed pages
        jingzhengs = con.xpath('//*[@id="pagedivid"]/ul/li[11]/text()')        # competition level
        # zip() stops at the shortest column list, and a missing cell shifts later values into the wrong rows
        for row in zip(key_words, overall_indexs, chagnwei_indexs, jingjias, collections, jingzhengs):
            data = list(row)
            print(data)
            datas_list.append(data)  # merge this page's rows into the result
        print('\n')
        if i < page_number:
            time.sleep(3)  # pause between pages only, not after the last one
    return datas_list


# Save the keyword data as an Excel (.xls) file
def bcsj(keyword, data):
    workbook = xlwt.Workbook(encoding='utf-8')
    booksheet = workbook.add_sheet('Sheet 1', cell_overwrite_ok=True)
    title = [['Keyword', 'Overall index', 'Long-tail words', 'Paid bids', 'Indexed pages', 'Competition']]
    title.extend(data)
    for i, row in enumerate(title):
        for j, col in enumerate(row):
            booksheet.write(i, j, col)
    workbook.save(f'{keyword}.xls')
    print(f'Saved data to {keyword}.xls')


if __name__ == '__main__':
    keyword = input('Enter a keyword>> ')
    print('Searching, please wait...')
    if search_keyword(keyword):
        page_number = get_page_number(keyword)
        print(f'Found {page_number} pages in total')
        input_page_num = int(input('How many pages do you want to collect?>> '))
        # Never request more pages than the site reports
        page_number = min(input_page_num, page_number)
        print('\nCollecting keyword data, please wait...\n')
        datas_list = get_keyword_datas(keyword, page_number)
        print('\n======================== Collection finished ========================\n')
        bcsj(keyword, datas_list)
```
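Because the site layout keeps changing, the column XPaths break easily, and reading each column separately and then zipping them can silently drop or misalign rows. A minimal sketch of the alternative, which walks the table row by row so a missing cell stays empty in its own row. It assumes a simplified, hypothetical markup modelled on the XPaths above (the real chinaz page is not reproduced here), and it uses the standard library's `xml.etree.ElementTree`, which supports only a subset of XPath, instead of lxml so it runs without extra packages.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup shaped like the XPaths in the script;
# the real chinaz page is much larger and is not well-formed XML.
SAMPLE = """
<div id="pagedivid">
  <ul><li>1</li><li><a>python tutorial</a></li><li><a>1200</a></li></ul>
  <ul><li>2</li><li><a>python download</a></li><li></li></ul>
</div>
"""


def cell_text(row, index):
    """Text of the index-th <li> (1-based, as in XPath), preferring an inner <a>."""
    li = row.find(f"li[{index}]")
    if li is None:
        return ""
    a = li.find("a")
    node = a if a is not None else li
    return (node.text or "").strip()


def parse_rows(markup, columns=(2, 3)):
    """Read the table one <ul> row at a time so a missing cell cannot shift other rows."""
    root = ET.fromstring(markup.strip())
    return [[cell_text(ul, c) for c in columns] for ul in root.findall("ul")]


rows = parse_rows(SAMPLE)
print(rows)
```

With lxml, the same row-first idea would be `for ul in con.xpath('//*[@id="pagedivid"]/ul')` followed by relative expressions such as `ul.xpath('string(li[2])')` for each column.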

Screenshots of part of the collection process:

Some of the results:

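Note: because the Chinaz webmaster-tools site has been redesigned, some fields differ from the original post; the current code takes precedence. It is best to open the site in a browser first and replace the cookies in the code with your own.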
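Instead of editing the hard-coded Cookie string each time, one option is to paste the Cookie header copied from your browser's developer tools into an environment variable. A minimal sketch, assuming a hypothetical variable name `CHINAZ_COOKIE`; the helper turns the raw header into a dict that could be passed to requests through its `cookies=` argument (and the `'Cookie'` entry removed from `headers`).

```python
import os


def cookie_header_to_dict(raw):
    """Split a raw 'name=value; name2=value2' Cookie header into a dict."""
    cookies = {}
    for part in raw.split(";"):
        # partition() splits at the first '=', so base64 values ending in '==' survive
        name, sep, value = part.strip().partition("=")
        if sep and name:  # skip empty or malformed pieces
            cookies[name] = value
    return cookies


def load_cookies(env_var="CHINAZ_COOKIE"):
    """Read the browser's Cookie header from a (hypothetical) environment variable."""
    return cookie_header_to_dict(os.environ.get(env_var, ""))


sample = "UM_distinctid=abc; qHistory=aHR0cA==; empty"
print(cookie_header_to_dict(sample))
```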

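Some readers asked for an exe version. I didn't know how to make one, but in the spirit of helping, I searched on Baidu and found it is quite simple. Along the way I discovered that the code I shared earlier was incomplete: the headers were only passed to the POST requests, and the GET request was missing them. This has been fixed.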
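Forgetting the headers on one call is easy when every call passes them separately. One way to rule it out is to build every request through a single helper that always merges the defaults. A stdlib-only sketch using `urllib.request` in place of requests (so it runs without extra packages); `DEFAULT_HEADERS` and `make_request` are illustrative names, not part of the original script.

```python
import urllib.parse
import urllib.request

# Illustrative defaults; in the script these would be the full `headers` dict.
DEFAULT_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36",
    "Accept-Language": "zh-CN,zh;q=0.9",
}


def make_request(url, data=None, extra_headers=None):
    """Build a GET (data=None) or form POST request that always carries the default headers."""
    headers = {**DEFAULT_HEADERS, **(extra_headers or {})}
    body = urllib.parse.urlencode(data).encode() if data is not None else None
    # Request picks POST automatically when a body is given, otherwise GET
    return urllib.request.Request(url, data=body, headers=headers)


get_req = make_request("https://data.chinaz.com/keyword/allindex/python/1")
post_req = make_request("http://stool.chinaz.com/baidu/words.aspx", data={"kw": "python"})
```

Sending is then `urllib.request.urlopen(req)`. With requests, the equivalent is one `requests.Session()` whose `session.headers.update(headers)` is called once, followed by `session.get` / `session.post` everywhere; the session also reuses the connection between pages.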

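Over the last few days I found time to revisit Python and realized the earlier code had many unnecessary `sleep` calls that made it run far too long. I added an option to choose how many pages to collect, and it is now very fast. I also repackaged a new build.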
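The two changes described above (a user-chosen page count and fewer sleeps) can be sketched as two small helpers. This is an illustration, not the author's code: the fallback for invalid input (collect all available pages) is an assumption, and the generator pauses only between pages, so a one-page run never sleeps.

```python
import time


def pages_to_collect(requested, available):
    """Clamp the user's page count to what the site reports.

    Assumption: non-numeric or non-positive input falls back to all available pages.
    """
    try:
        n = int(requested)
    except ValueError:
        return available
    return available if n <= 0 else min(n, available)


def paced(pages, delay=3.0):
    """Yield page numbers 1..pages, sleeping only between consecutive pages."""
    for i in range(1, pages + 1):
        if i > 1:
            time.sleep(delay)  # no sleep before the first page or after the last
        yield i
```

In the main loop, `for i in paced(pages_to_collect(input_page_num, page_number)):` would replace both the `range` and the trailing `time.sleep(3)`.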

Download:

Password: bbfx

My exe was packaged with PyInstaller. The package is fairly large; download it if you need it.
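For readers who would rather build the exe themselves: PyInstaller can also be driven from Python through `PyInstaller.__main__.run()`, which accepts the same arguments as the command line. A minimal sketch, assuming the script is saved as `keyword_miner.py` (a hypothetical file name). `--onefile` produces a single exe. Building inside a fresh virtual environment that contains only requests, lxml and xlwt is often suggested for keeping the bundle smaller, and `--exclude-module` can drop modules you know the script never uses.

```python
def pyinstaller_args(script, name, excludes=()):
    """Build the PyInstaller argument list for a single-file exe."""
    args = ["--onefile", "--name", name]
    for module in excludes:
        args += ["--exclude-module", module]
    return args + [script]


def build_exe(script, name, excludes=()):
    """Run PyInstaller on the script (requires `pip install pyinstaller`)."""
    # Imported lazily so this module still loads where PyInstaller is not installed.
    import PyInstaller.__main__
    PyInstaller.__main__.run(pyinstaller_args(script, name, excludes))
```

Calling `build_exe("keyword_miner.py", "keyword_miner")` from a small build script is equivalent to running `pyinstaller --onefile --name keyword_miner keyword_miner.py`.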