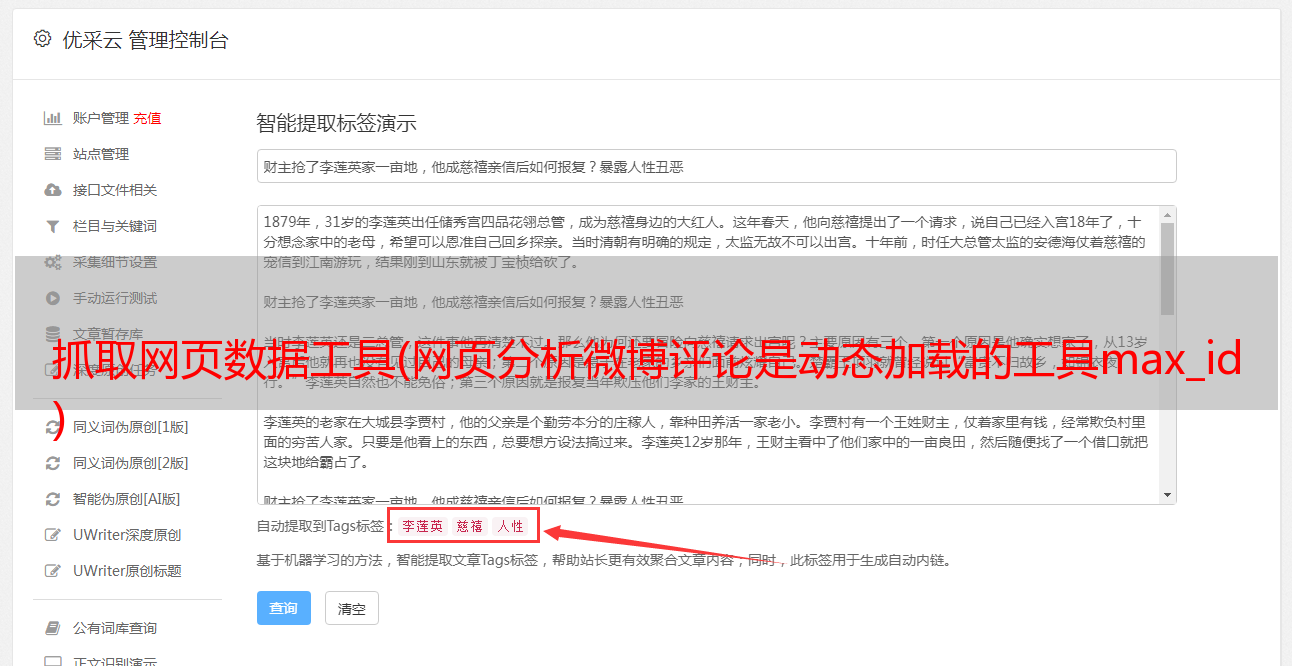

Web data scraping tool (page analysis: Weibo comments are loaded dynamically and paged by max_id)

优采云 Published: 2021-12-24 09:10

Site link

https://m.weibo.cn/detail/4669040301182509

Network analysis

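Weibo comments are loaded dynamically. Open the browser's developer tools and scroll down the page; the request that carries the comment data then shows up in the network panel.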

Getting the real URL

https://m.weibo.cn/comments/hotflow?id=4669040301182509&mid=4669040301182509&max_id_type=0

https://m.weibo.cn/comments/hotflow?id=4669040301182509&mid=4669040301182509&max_id=3698934781006193&max_id_type=0

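The difference between the two URLs is easy to see: the first has no max_id parameter, while the second includes one. That max_id is simply the max_id value returned in the previous response.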
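As a minimal sketch of the URL pattern described above, the two forms can be produced by one helper. The names `BASE_URL` and `build_comments_url` are hypothetical and introduced only for illustration; the query parameters and their order are taken from the two URLs shown.

```python
from urllib.parse import urlencode

# Endpoint taken from the URLs captured in the developer tools.
BASE_URL = "https://m.weibo.cn/comments/hotflow"


def build_comments_url(post_id, max_id=None, max_id_type=0):
    """Build the comment URL for one page (hypothetical helper).

    The first page carries no max_id; later pages carry the max_id
    returned by the previous response.
    """
    params = {"id": post_id, "mid": post_id}
    if max_id is not None:
        params["max_id"] = max_id
    params["max_id_type"] = max_id_type
    # urlencode keeps the insertion order of the dict.
    return f"{BASE_URL}?{urlencode(params)}"


if __name__ == "__main__":
    print(build_comments_url("4669040301182509"))
    print(build_comments_url("4669040301182509", max_id=3698934781006193))
```

Building the query string from a dict avoids hand-editing the URL for each page and keeps the first-page case and the follow-up case in one place.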

One thing to watch is the max_id_type parameter: its value is not constant, so it also has to be read from each response rather than hard-coded.
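The paging rule can be sketched independently of the network layer. In the sketch below, `iter_comment_pages` and its `fetch_page` argument are hypothetical names: `fetch_page` stands for any function that takes the current cursor and returns the decoded JSON of one response. Stopping when the response has no `data` field, and the `max_pages` cap, are assumptions of this sketch, not behaviour documented by the site.

```python
def iter_comment_pages(fetch_page, max_pages=5):
    """Yield the 'data' dict of each page, carrying the cursor forward.

    fetch_page(cursor) is a caller-supplied function (hypothetical);
    cursor is None for the first page, otherwise (max_id, max_id_type)
    taken from the previous response.
    """
    cursor = None
    for _ in range(max_pages):
        payload = fetch_page(cursor)
        data = payload.get("data")
        if not data:
            # Assumption: a response without 'data' means no more pages.
            break
        yield data
        # Both values come from the response, never from a constant.
        cursor = (data["max_id"], data["max_id_type"])


if __name__ == "__main__":
    # Stand-in fetcher with made-up values, used only to show the flow.
    def fake_fetch(cursor):
        if cursor is None:
            return {"data": {"max_id": 111, "max_id_type": 0, "data": []}}
        return {"ok": 0}

    for page in iter_comment_pages(fake_fetch):
        print(page["max_id"], page["max_id_type"])
```

Passing the fetcher in as an argument keeps the cursor logic testable without sending real requests.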

Code

import re
import time
import random

import requests
import pandas as pd

df = pd.DataFrame()

try:
    a = 1  # page counter; the first page is requested without max_id
    while True:
        header = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/38.0.2125.122 UBrowser/4.0.3214.0 Safari/537.36'
        }
        response = requests.get('https://m.weibo.cn/detail/4669040301182509', headers=header)
        # Weibo blocks the account after roughly a few dozen pages; refreshing
        # the cookies on every pass lets the crawler last a bit longer.
        # The indexes used below depend on the order in which the cookies come back.
        cookie = [c.value for c in response.cookies]  # cookie values as a list
        headers = {
            # Cookie of a logged-in session; fill SUB with the value from your own login
            'cookie': f'WEIBOCN_FROM={cookie[3]}; SUB=; _T_WM={cookie[4]}; MLOGIN={cookie[1]}; M_WEIBOCN_PARAMS={cookie[2]}; XSRF-TOKEN={cookie[0]}',
            'referer': 'https://m.weibo.cn/detail/4669040301182509',
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/38.0.2125.122 UBrowser/4.0.3214.0 Safari/537.36'
        }
        if a == 1:
            url = 'https://m.weibo.cn/comments/hotflow?id=4669040301182509&mid=4669040301182509&max_id_type=0'
        else:
            url = f'https://m.weibo.cn/comments/hotflow?id=4669040301182509&mid=4669040301182509&max_id={max_id}&max_id_type={max_id_type}'
        html = requests.get(url=url, headers=headers).json()
        data = html['data']  # raises KeyError once no data comes back, which ends the loop
        max_id = data['max_id']  # max_id and max_id_type feed the next URL
        max_id_type = data['max_id_type']
        for i in data['data']:
            screen_name = i['user']['screen_name']
            i_d = i['user']['id']
            like_count = i['like_count']  # number of likes
            created_at = i['created_at']  # timestamp
            text = re.sub(r'<[^>]*>', '', i['text'])  # comment text with HTML tags stripped
            print(text)
            data_json = pd.DataFrame({'screen_name': [screen_name], 'i_d': [i_d], 'like_count': [like_count], 'created_at': [created_at], 'text': [text]})
            df = pd.concat([df, data_json])
        time.sleep(random.uniform(2, 7))  # random pause between pages
        a += 1
except Exception as e:
    print(e)

df.to_csv('微博.csv', encoding='utf-8', mode='a+', index=False)
print(df.shape)
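The per-comment extraction in the loop above can also be isolated into small functions, which makes it possible to check the parsing without sending any request. This is only a sketch: `strip_tags` and `comments_to_rows` are hypothetical names, the pattern `<[^>]*>` is an assumption about what the tag-stripping regex is meant to be, and the sample comment is made up for illustration. The field names are the ones the script reads.

```python
import re

# Assumed pattern: remove anything that looks like an HTML tag.
TAG_RE = re.compile(r"<[^>]*>")


def strip_tags(text):
    """Return the comment text with HTML tags removed."""
    return TAG_RE.sub("", text)


def comments_to_rows(comments):
    """Turn the list under data['data'] into a list of flat dicts."""
    return [
        {
            "screen_name": c["user"]["screen_name"],
            "i_d": c["user"]["id"],
            "like_count": c["like_count"],
            "created_at": c["created_at"],
            "text": strip_tags(c["text"]),
        }
        for c in comments
    ]


if __name__ == "__main__":
    # Made-up comment, shaped like the fields the script reads.
    sample = [{
        "user": {"screen_name": "demo_user", "id": 1},
        "like_count": 3,
        "created_at": "2021-12-24",
        "text": 'hello <span class="url-icon"><img alt="[doge]" src="x.png"></span>world',
    }]
    print(comments_to_rows(sample))
```

The resulting rows can be passed to `pd.DataFrame(rows)` once per page, which avoids building a one-row DataFrame and calling `pd.concat` for every single comment.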

Results